How NAS Storage Solutions Optimize Data Placement Using Access Pattern Intelligence

- Mary J. Williams

- Apr 13

- 4 min read

Managing unstructured data at scale requires precision. As enterprise workloads grow, IT administrators face the continuous challenge of balancing performance requirements against infrastructure costs. Storing all files on high-performance flash arrays is financially inefficient, while relying solely on high-capacity spinning disks creates unacceptable latency bottlenecks. To resolve this structural conflict, engineers have developed sophisticated mechanisms that dynamically migrate files based on their actual usage.

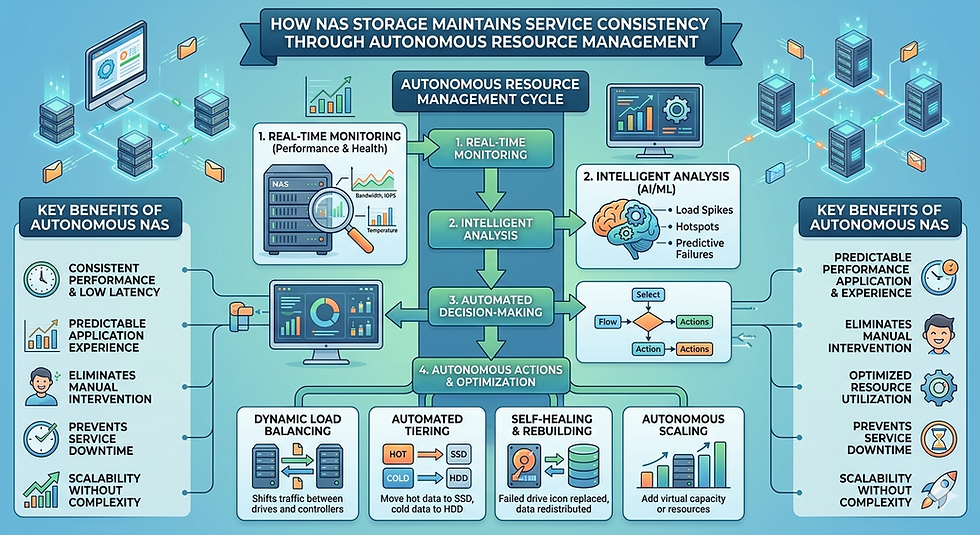

By observing exactly how applications and users interact with files over time, infrastructure administrators can make informed decisions about where those files should physically reside. This concept, known as access pattern intelligence, represents a major shift in storage architecture. Rather than relying on static policies or manual intervention, modern environments continuously monitor read and write requests. They use this telemetry data to position information exactly where it needs to be, precisely when it is required.

Understanding the mechanics of this capability allows technology leaders to design more resilient, cost-effective, and highly performant architectures.

The Architecture of Intelligent Data Management

Historically, data tiering was a rigid process. Administrators would set basic rules, such as moving any file that had not been modified in ninety days to a cheaper archive tier. This binary approach failed to account for complex workload behaviors. For example, a reference file might be read thousands of times a day but never modified, triggering an incorrect migration to slow storage under traditional rules.
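
The failure mode described above can be reproduced in a few lines. This is a minimal sketch, not any vendor's policy engine: the `FileStats` fields, the tier names, and the `busy_reads` threshold are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class FileStats:
    path: str                  # hypothetical example path
    days_since_modified: int
    reads_last_day: int


def static_rule_tier(f: FileStats, max_age_days: int = 90) -> str:
    """Traditional rule: looks only at how long ago the file was modified."""
    return "archive" if f.days_since_modified > max_age_days else "primary"


def access_aware_tier(f: FileStats, max_age_days: int = 90, busy_reads: int = 100) -> str:
    """Keeps a file on primary storage while it is still being read heavily.

    The busy_reads threshold is an arbitrary illustrative value.
    """
    if f.reads_last_day >= busy_reads:
        return "primary"
    return static_rule_tier(f, max_age_days)


# A reference file: unmodified for over a year, but read thousands of times a day.
reference = FileStats("/refs/price_table.csv", days_since_modified=400, reads_last_day=5000)

print(static_rule_tier(reference))   # archive
print(access_aware_tier(reference))  # primary
```

The age-only rule sends the heavily read file to the archive tier, while the rule that also looks at reads keeps it where it is.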

Advanced NAS storage solutions solve this problem by introducing granular telemetry collection at the file system level. These architectures do not just look at creation or modification dates. They track read frequencies, write bursts, user concurrency, and application-specific I/O sizes. By gathering this metadata, the infrastructure builds a comprehensive behavioral profile for every block of data it manages.
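
As a rough illustration of what such a behavioral profile might hold, the sketch below accumulates a few of the signals mentioned above. The `AccessProfile` class, its field names, and the sample events are hypothetical, and the profile is keyed by file path for simplicity, whereas a real system may track individual blocks.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class AccessProfile:
    reads: int = 0
    writes: int = 0
    bytes_transferred: int = 0
    clients: set = field(default_factory=set)  # distinct clients seen

    def record(self, op: str, size: int, client: str) -> None:
        """Fold one I/O event into the profile."""
        if op == "read":
            self.reads += 1
        elif op == "write":
            self.writes += 1
        else:
            raise ValueError(f"unknown operation: {op}")
        self.bytes_transferred += size
        self.clients.add(client)

    @property
    def avg_io_size(self) -> float:
        """Average bytes per operation, a rough stand-in for I/O size."""
        ops = self.reads + self.writes
        return self.bytes_transferred / ops if ops else 0.0


# One profile per path, created on first access.
profiles = defaultdict(AccessProfile)

# Hypothetical event stream: (path, operation, size in bytes, client).
events = [
    ("/proj/model.bin", "read", 1_048_576, "host-a"),
    ("/proj/model.bin", "read", 1_048_576, "host-b"),
    ("/proj/log.txt", "write", 4_096, "host-a"),
]
for path, op, size, client in events:
    profiles[path].record(op, size, client)

print(profiles["/proj/model.bin"])
```

Here the number of distinct clients serves as a crude proxy for user concurrency.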

Implementing these advanced NAS storage solutions fundamentally changes how an organization handles capacity planning. Instead of guessing which volumes will require expensive solid-state drives, the infrastructure autonomously aligns resources with actual demand.

Decoding Access Pattern Intelligence

Access pattern intelligence relies on continuous background analysis. The core engine monitors the I/O stream, logging metadata about every transaction. When a user requests a file, a typical NAS system records the timestamp, the nature of the request, and the specific blocks accessed.

Over time, this continuous logging creates a heat map of the entire directory structure. The algorithms identify "hot" data: files experiencing intense, concurrent I/O that require sub-millisecond latency. Conversely, they identify "cold" data that consumes capacity but sees little to no active usage.
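
One common way to turn an access log into a heat value is to weight each access by its age, so that recent activity counts for more than old activity. The sketch below uses exponential decay for this. It is an assumed scoring scheme chosen for illustration, not something the systems described here are confirmed to use, and the half-life and thresholds are arbitrary.

```python
HALF_LIFE_HOURS = 24.0  # assumed: an access loses half its weight every 24 hours


def heat_score(access_ages_hours, half_life=HALF_LIFE_HOURS):
    """Sum of accesses, each halved in weight for every half_life of age."""
    return sum(0.5 ** (age / half_life) for age in access_ages_hours)


def classify(score, hot_threshold=10.0, cold_threshold=0.5):
    """Map a heat score to a label; both thresholds are illustrative."""
    if score >= hot_threshold:
        return "hot"
    if score <= cold_threshold:
        return "cold"
    return "warm"


busy = [0.0] * 20       # twenty accesses just now
middling = [24.0] * 4   # four accesses one day ago
stale = [240.0]         # a single access ten days ago

for name, ages in [("busy", busy), ("middling", middling), ("stale", stale)]:
    print(name, classify(heat_score(ages)))
```

With these inputs the three files are labeled hot, warm, and cold respectively.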

Crucially, a modern NAS system goes beyond simple frequency counting and applies predictive heuristics. If a specific directory typically experiences a massive spike in read requests during the last week of the financial quarter, the intelligence engine can recognize this recurring pattern. It can then proactively promote the relevant data to high-performance tiers before the application requests it, so the spike is served from fast storage rather than triggering a reactive migration during critical business operations.
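
A recurring spike of this kind can be detected with a very simple heuristic: look at several past cycles and flag the days that were unusually busy in most of them. The sketch below is one such heuristic under stated assumptions. The function names, the `factor` and `min_fraction` parameters, and the ten-day toy cycles are hypothetical, and every cycle is assumed to have the same length.

```python
from statistics import mean


def recurring_spike_days(history, factor=3.0, min_fraction=0.75):
    """Return day indexes that spiked in at least min_fraction of past cycles.

    history: list of cycles, each a list of daily read counts of equal length.
    A day counts as a spike when its reads exceed factor x that cycle's mean.
    """
    cycle_len = len(history[0])
    hits = [0] * cycle_len
    for cycle in history:
        baseline = mean(cycle)
        for day, reads in enumerate(cycle):
            if reads > factor * baseline:
                hits[day] += 1
    needed = min_fraction * len(history)
    return [day for day, n in enumerate(hits) if n >= needed]


def should_prepromote(day_index, spike_days, lead_days=1):
    """True if a predicted spike starts today or within lead_days."""
    return any(0 <= s - day_index <= lead_days for s in spike_days)


# Toy data: ten-day cycles where the last two days are usually very busy.
spiky = [10] * 8 + [500, 500]
flat = [10] * 10
history = [spiky, spiky, flat, spiky]

spike_days = recurring_spike_days(history)
print(spike_days)                        # [8, 9]
print(should_prepromote(7, spike_days))  # True
print(should_prepromote(5, spike_days))  # False
```

Days 8 and 9 spiked in three of the four cycles, so on day 7 the heuristic recommends promoting the data ahead of the expected load.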

The Mechanics of Automated Data Placement

Once the access patterns are fully analyzed, the infrastructure must physically move the blocks to their optimal location. This data placement occurs seamlessly in the background, entirely transparent to the end-user and the application.

Hot, Warm, and Cold Data Classification

The placement engine categorizes storage media into discrete tiers. NVMe and SSD arrays handle the hot data. Standard SAS drives manage "warm" data that requires moderate access speeds. Finally, high-density SATA drives or cloud object storage repositories hold the cold, archival data.

When leading NAS storage solutions detect a file transitioning from hot to cold, they schedule a block-level migration. The migration process utilizes idle CPU cycles and available network bandwidth to move the data without disrupting active workloads. Because the namespace remains unified, the file path never changes. Users continue to access \\server\share\file.doc exactly as they always have, unaware that the physical blocks now reside on a different hardware tier.

This seamless mobility is the primary advantage of utilizing advanced NAS storage solutions. It removes the operational burden of manual data migrations and eliminates the need for complex stub files or symbolic links that frequently break during system upgrades.
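
The idea of a unified namespace can be reduced to a lookup table that maps a stable path to whatever tier currently holds the data. The toy model below shows only that indirection. The `UnifiedNamespace` class and the tier names are hypothetical, and a real file system tracks block locations rather than one tier per file.

```python
class UnifiedNamespace:
    """Toy model: clients see paths, the tier lookup stays internal."""

    def __init__(self):
        self._location = {}  # path -> name of the tier holding the data

    def create(self, path, tier="nvme"):
        self._location[path] = tier

    def migrate(self, path, new_tier):
        """Move the data to another tier; the path itself is untouched."""
        if path not in self._location:
            raise FileNotFoundError(path)
        self._location[path] = new_tier

    def paths(self):
        """What a client listing the share would see."""
        return sorted(self._location)

    def tier_of(self, path):
        """Administrative view of where the data currently lives."""
        return self._location[path]


ns = UnifiedNamespace()
doc = r"\\server\share\file.doc"
ns.create(doc, tier="nvme")

before = ns.paths()
ns.migrate(doc, "sata")
after = ns.paths()

print(before == after)  # True: the client-visible path did not change
print(ns.tier_of(doc))  # sata
```

Because clients never hold a reference to the tier, no stub file or link has to be left behind at the old location.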

Performance and Cost Benefits

The financial impact of access pattern intelligence is substantial. Organizations typically discover that 70 to 80 percent of their unstructured data is cold. By automatically migrating this vast majority of files to low-cost, high-density tiers, the overall cost per terabyte drops dramatically.
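
The effect on cost per terabyte is a weighted average, which the sketch below works through. The two cost figures are placeholder units invented for the arithmetic, not real prices, and the 75 percent cold fraction is simply the midpoint of the range quoted above.

```python
def blended_cost_per_tb(tiers):
    """Weighted average cost.

    tiers: list of (fraction_of_data, cost_per_tb) pairs whose fractions sum to 1.
    """
    total_fraction = sum(fraction for fraction, _ in tiers)
    if abs(total_fraction - 1.0) > 1e-9:
        raise ValueError("tier fractions must sum to 1")
    return sum(fraction * cost for fraction, cost in tiers)


# Placeholder cost units per TB (assumptions, not market prices).
FLASH_COST = 100.0
DENSE_COST = 20.0

all_flash = blended_cost_per_tb([(1.0, FLASH_COST)])
tiered = blended_cost_per_tb([(0.25, FLASH_COST), (0.75, DENSE_COST)])
saving = 1.0 - tiered / all_flash

print(all_flash, tiered, round(saving, 2))
```

With these placeholder figures, moving the cold 75 percent to the dense tier lowers the blended cost from 100 to 40 units per terabyte. The actual saving depends entirely on the real price gap between tiers.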

Simultaneously, the performance of active workloads improves. Because the high-speed flash tiers are no longer cluttered with dormant files, they can dedicate their full IOPS capacity to the specific applications that truly require rapid access. A well-configured NAS system ensures that the expensive, high-performance resources are reserved for the tasks that generate revenue or drive critical business outcomes.

Furthermore, this automated placement extends the lifecycle of existing hardware. By optimizing capacity utilization across all available tiers, administrators can delay expensive forklift upgrades and extract maximum value from their current infrastructure investments.

Frequently Asked Questions

What happens if cold data is suddenly requested?

If an application requests a file that has been migrated to a cold tier, the NAS system serves it directly from that tier. The initial read may incur a latency penalty compared to flash storage, but the intelligence engine registers the new activity as it happens. If the access pattern suggests sustained usage, the file is automatically promoted back to a high-performance tier.
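
One way to separate a one-off read from sustained usage is a sliding window: promote only when several accesses land within a short period. The sketch below shows that idea. The `PromotionTracker` class, the three-access minimum, and the ten-minute window are assumptions for illustration.

```python
from collections import deque


class PromotionTracker:
    """Flags a cold file for promotion after repeated recent accesses."""

    def __init__(self, min_accesses=3, window_seconds=600.0):
        self.min_accesses = min_accesses
        self.window = window_seconds
        self._recent = deque()  # timestamps of accesses inside the window

    def record_access(self, now: float) -> bool:
        """Record an access at time `now` (seconds); True means promote."""
        self._recent.append(now)
        # Drop accesses that have fallen out of the window.
        while self._recent and now - self._recent[0] > self.window:
            self._recent.popleft()
        return len(self._recent) >= self.min_accesses


tracker = PromotionTracker()
# One isolated read at t=0, then three reads close together later on.
results = [tracker.record_access(t) for t in (0.0, 1000.0, 1100.0, 1200.0)]
print(results)  # [False, False, False, True]
```

The isolated read at t=0 has expired by the time the later burst arrives, so promotion is triggered only by the third read of that burst.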

Does background data migration impact network performance?

Enterprise-grade NAS storage solutions are designed to throttle their internal migration processes. They monitor the primary I/O queues and only execute block movements when there is sufficient idle bandwidth. This ensures that background tiering never starves active client requests or degrades application performance.
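
The throttling behavior can be approximated by giving migration only the bandwidth left over after client traffic and a safety reserve. The sketch below simulates that in one-second ticks. The capacity, the 20 percent reserve, and the load figures are made-up numbers, and real systems may watch queue depth or latency rather than raw throughput.

```python
def migration_budget(capacity_mbps, client_mbps, reserve_fraction=0.2):
    """Bandwidth available to migration after client load and a reserve."""
    headroom = capacity_mbps * (1.0 - reserve_fraction) - client_mbps
    return max(0.0, headroom)


def simulate(pending_mb, client_load_per_tick, capacity_mbps=1000.0):
    """Move pending data in one-second ticks, never exceeding the budget.

    Returns the amount moved in each tick and the amount still pending.
    """
    moved_per_tick = []
    for load in client_load_per_tick:
        step = min(pending_mb, migration_budget(capacity_mbps, load))
        moved_per_tick.append(step)
        pending_mb -= step
    return moved_per_tick, pending_mb


# 600 MB to migrate while client load varies: heavy, light, moderate.
moved, remaining = simulate(600.0, [900.0, 300.0, 700.0])
print(moved, remaining)
```

During the heavy tick the budget is zero and nothing moves. The migration then catches up in the two quieter ticks.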

Can administrators override the automated placement algorithms?

Yes. While the algorithms handle the majority of tasks efficiently, administrators can pin specific volumes, directories, or files to a particular tier. This is often necessary for strict compliance mandates or for applications that need guaranteed performance from the NAS system regardless of historical access patterns.
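
A pin can be modeled as a rule that takes precedence over whatever tier the placement algorithm computed. The sketch below resolves pins by longest matching path prefix. The `effective_tier` function, the pin table, and the paths are hypothetical, and actual products expose pinning through their own management interfaces.

```python
def effective_tier(path, computed_tier, pins):
    """Return the pinned tier for the longest matching prefix, if any.

    pins: dict mapping a directory or file path to a tier name.
    Falls back to computed_tier when no pin applies.
    """
    best_prefix = None
    for prefix in pins:
        # Match the exact path or anything beneath the pinned directory.
        matches = path == prefix or path.startswith(prefix.rstrip("/") + "/")
        if matches and (best_prefix is None or len(prefix) > len(best_prefix)):
            best_prefix = prefix
    return pins[best_prefix] if best_prefix is not None else computed_tier


# Hypothetical pins set by an administrator.
pins = {"/finance": "sas", "/finance/ledger": "nvme"}

print(effective_tier("/finance/ledger/q1.db", "sata", pins))   # nvme
print(effective_tier("/finance/reports/a.pdf", "sata", pins))  # sas
print(effective_tier("/finance-old/a.pdf", "sata", pins))      # sata
```

The more specific pin wins for the ledger directory, and a path that merely shares a name prefix with a pinned directory is left to the algorithm.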

Next Steps for Data Infrastructure Architecture

Relying on manual capacity management is no longer a viable strategy for enterprise environments. The sheer volume and velocity of unstructured data require automated, intelligent systems that can adapt to changing workload demands in real time.

By leveraging access pattern intelligence, technology leaders can build efficient, scalable, and cost-effective environments. The telemetry gathered by these systems reduces guesswork and helps align infrastructure investments with actual business requirements. Evaluate your current telemetry capabilities and consider implementing dynamic data placement to build a more resilient and better-optimized architecture for your organization.
