Software-Defined NAS Storage: Building Container-Ready, Kubernetes-Integrated Data Platforms for Modern Workloads

- Mary J. Williams

- Feb 20

The shift to containerized applications has fundamentally changed how organizations approach data storage. Traditional storage solutions, built for static workloads and predictable growth patterns, struggle to keep pace with the dynamic, distributed nature of Kubernetes environments.

Software-defined NAS storage offers an approach designed for these demands. By decoupling storage software from hardware and integrating directly with container orchestration platforms, these systems provide the flexibility, scalability, and automation that cloud-native applications require. Organizations running Kubernetes workloads need storage that can scale horizontally, provision resources on demand, and maintain data consistency across distributed clusters: capabilities that legacy NAS systems simply weren't built to deliver.

This post explores how software-defined NAS storage works within Kubernetes environments, the advantages of scale-out architectures, and practical considerations for deploying NAS in AWS cloud infrastructure.

Understanding Software-Defined NAS Storage

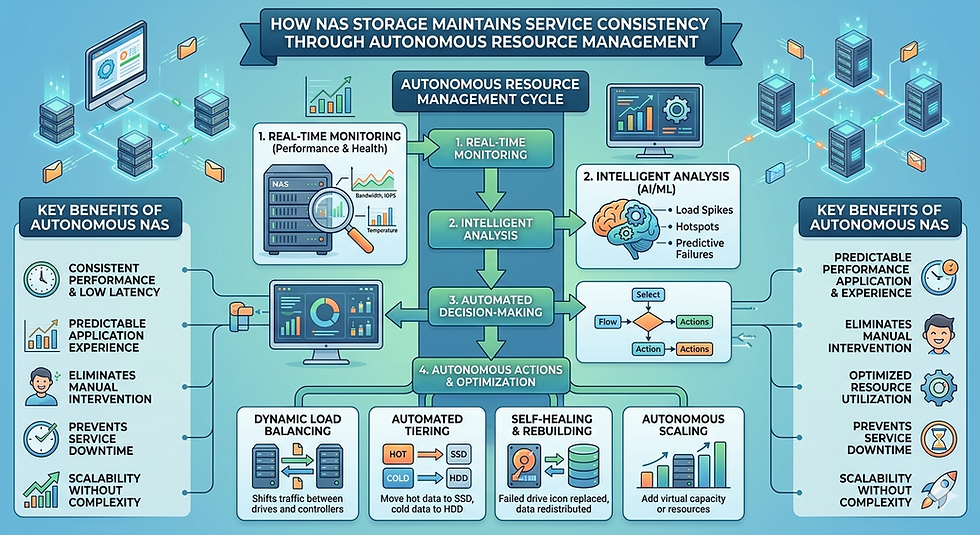

Software-defined NAS storage separates the storage control plane from the underlying hardware. Organizations can then manage storage resources through APIs and policy-based automation rather than manual configuration. This approach turns storage from a rigid infrastructure component into a programmable resource that can adapt to application needs in real time.

Unlike traditional NAS systems that rely on proprietary hardware and fixed capacity, software-defined solutions run on commodity servers or cloud instances. Storage administrators define policies for data protection, performance tiers, and access controls through software interfaces, while the system handles the complexity of data placement, replication, and scaling automatically.

For Kubernetes environments, this flexibility proves essential. As pods spin up and down based on demand, storage volumes need to be created, attached, and released without manual intervention. Software-defined NAS storage integrates with Kubernetes through Container Storage Interface (CSI) drivers, enabling seamless orchestration of persistent storage alongside compute resources.
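To make the CSI hand-off more concrete, the sketch below lists the order of CSI remote procedure calls (RPCs) that a volume typically goes through from provisioning to mount and back again. The RPC names come from the CSI specification. The two boolean flags are a simplification: real drivers advertise these capabilities through `ControllerGetCapabilities` and `NodeGetCapabilities`, and many NFS-style drivers skip the controller publish step entirely. The helper functions themselves are hypothetical and are not part of any driver.

```python
from __future__ import annotations


def csi_mount_sequence(controller_publish: bool, stage: bool) -> list[str]:
    """Order of CSI RPCs from dynamic provisioning to a volume mounted in a pod."""
    # In Kubernetes, the external-provisioner sidecar calls CreateVolume
    # when a PersistentVolumeClaim is created.
    sequence = ["CreateVolume"]
    # The external-attacher sidecar calls this only if the driver supports it.
    if controller_publish:
        sequence.append("ControllerPublishVolume")
    # The kubelet on the chosen node first stages the volume (a node-wide mount)...
    if stage:
        sequence.append("NodeStageVolume")
    # ...and then publishes it into the pod's volume directory.
    sequence.append("NodePublishVolume")
    return sequence


def csi_teardown_sequence(controller_publish: bool, stage: bool, delete: bool) -> list[str]:
    """Reverse path when the pod goes away and, optionally, the volume is deleted."""
    sequence = ["NodeUnpublishVolume"]
    if stage:
        sequence.append("NodeUnstageVolume")
    if controller_publish:
        sequence.append("ControllerUnpublishVolume")
    # DeleteVolume runs only when the claim is deleted and the reclaim policy is Delete.
    if delete:
        sequence.append("DeleteVolume")
    return sequence


print(csi_mount_sequence(controller_publish=False, stage=True))
print(csi_teardown_sequence(controller_publish=False, stage=True, delete=False))
```

The key point is that none of these steps is triggered by a human. Kubernetes components drive each call in response to changes in the claim and pod objects.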

Scale-Out NAS Storage Architecture

Scale-out NAS storage distributes data across multiple nodes in a cluster, so capacity and performance grow roughly linearly as you add hardware. Rather than hitting the ceiling of a single controller's capabilities, these systems aggregate resources from every node to deliver higher throughput and accommodate larger datasets.

Each node in a scale-out architecture contributes both storage capacity and processing power. When you add a new node, the system automatically rebalances data across the expanded cluster, increasing available IOPS and bandwidth without downtime. This horizontal scaling model aligns naturally with Kubernetes' approach to application scaling, where workloads spread across multiple pods and nodes.
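Rendezvous (highest-random-weight) hashing is one way to see why adding a node only needs to move part of the data. It is used here purely as an assumed placement scheme for illustration; commercial scale-out NAS products use their own, often proprietary, data layouts. When a cluster grows from N to N+1 nodes, roughly 1/(N+1) of the objects change owner, and every object that moves goes to the new node.

```python
from __future__ import annotations

import hashlib


def score(obj: str, node: str) -> int:
    """Deterministic pseudo-random weight for an (object, node) pair."""
    digest = hashlib.sha256(f"{obj}:{node}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def owner(obj: str, nodes: list[str]) -> str:
    """Rendezvous hashing: the node with the highest weight owns the object."""
    return max(nodes, key=lambda node: score(obj, node))


def rebalance_plan(objects: list[str], old_nodes: list[str],
                   new_nodes: list[str]) -> dict[str, tuple[str, str]]:
    """Objects whose owner changes when the node list changes, as (source, destination)."""
    moves = {}
    for obj in objects:
        before, after = owner(obj, old_nodes), owner(obj, new_nodes)
        if before != after:
            moves[obj] = (before, after)
    return moves


objects = [f"file-{i}" for i in range(10_000)]
old = ["node-1", "node-2", "node-3", "node-4"]
moves = rebalance_plan(objects, old, old + ["node-5"])
print(f"moved {len(moves) / len(objects):.1%} of objects")
print({dst for _, dst in moves.values()})  # {'node-5'}: only the new node receives data
```

Growing from four to five nodes moves about 20% of the objects. A naive `hash(obj) % len(nodes)` placement would instead move about 80% of them, which is why scale-out systems avoid that kind of layout.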

The distributed nature of scale-out NAS storage also improves fault tolerance. Data is replicated across multiple nodes according to configured policies. As long as every piece of data is stored on at least two nodes, a single node failure doesn't cause data loss or service interruption. The system keeps operating while failed components are replaced, maintaining availability for critical containerized applications.
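The sketch below checks the replication claim under stated assumptions. Each object gets `rf` full copies on distinct nodes, chosen by rendezvous hashing (an illustrative scheme, not any particular product's). The code then verifies which node failures every object survives. Many systems use erasure coding instead of full copies, which changes the capacity arithmetic in the last function.

```python
from __future__ import annotations

import hashlib


def score(obj: str, node: str) -> int:
    """Deterministic pseudo-random weight for an (object, node) pair."""
    digest = hashlib.sha256(f"{obj}:{node}".encode()).digest()
    return int.from_bytes(digest[:8], "big")


def replica_nodes(obj: str, nodes: list[str], rf: int) -> list[str]:
    """Place `rf` copies of an object on the `rf` highest-weight distinct nodes."""
    if not 1 <= rf <= len(nodes):
        raise ValueError("replication factor must be between 1 and the node count")
    return sorted(nodes, key=lambda node: score(obj, node), reverse=True)[:rf]


def survives(objects: list[str], nodes: list[str], rf: int, failed: set[str]) -> bool:
    """True if every object keeps at least one replica on a node outside `failed`."""
    return all(
        any(node not in failed for node in replica_nodes(obj, nodes, rf))
        for obj in objects
    )


def usable_capacity_tb(raw_tb: float, rf: int) -> float:
    """With full-copy replication every object is stored `rf` times."""
    return raw_tb / rf


nodes = [f"node-{i}" for i in range(1, 6)]
objects = [f"file-{i}" for i in range(1_000)]
print(all(survives(objects, nodes, 2, {n}) for n in nodes))  # True: any one node can fail
print(survives(objects, nodes, 1, {"node-1"}))               # False: single copies are lost
print(usable_capacity_tb(500.0, 3))
```

This is the tradeoff behind "configured policies". A higher replication factor tolerates more simultaneous failures but divides raw capacity by the same factor.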

Performance benefits extend beyond simple capacity additions. With multiple nodes serving data simultaneously, scale-out architectures avoid the controller bottleneck common in dual-controller systems. Large file operations or high-concurrency workloads can draw on the aggregate bandwidth of the entire cluster rather than being constrained by a single controller's network or storage interfaces.
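Some back-of-the-envelope arithmetic illustrates the aggregate-bandwidth point. All figures below are hypothetical. The model is deliberately idealized: deliverable throughput is the smaller of what the serving side can push and what the clients can consume. It ignores network limits, metadata overhead, and uneven data distribution.

```python
def effective_throughput_gbs(units: int, per_unit_gbs: float, client_demand_gbs: float) -> float:
    """Deliverable GB/s: the smaller of serving capacity and client demand."""
    return min(units * per_unit_gbs, client_demand_gbs)


def scan_time_seconds(dataset_gb: float, throughput_gbs: float) -> float:
    """Time to read a dataset once at a steady throughput."""
    return dataset_gb / throughput_gbs


DATASET_GB = 10_000  # hypothetical training dataset
DEMAND_GBS = 30.0    # hypothetical aggregate demand from many client pods

dual_controller = effective_throughput_gbs(2, 5.0, DEMAND_GBS)  # 2 controllers x 5 GB/s
scale_out = effective_throughput_gbs(10, 2.0, DEMAND_GBS)       # 10 nodes x 2 GB/s

print(scan_time_seconds(DATASET_GB, dual_controller))  # 1000.0
print(scan_time_seconds(DATASET_GB, scale_out))        # 500.0
```

Even with slower individual nodes, the scale-out cluster halves the scan time in this example because its ceiling is the sum across ten nodes rather than two.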

Kubernetes Integration for Container-Ready Storage

Modern NAS storage platforms integrate with Kubernetes through CSI drivers that expose storage capabilities directly to the orchestration layer. When a pod requires persistent storage, Kubernetes can automatically provision a volume from the NAS system, attach it to the appropriate node, and make it available to the container, all without human intervention.
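From the application side, all of this starts with two objects: a PersistentVolumeClaim and a pod that references it. The sketch below builds both as Python dicts and prints them as a JSON `List`, which `kubectl apply -f -` accepts just like YAML. The StorageClass name `nas-standard` and the object names are hypothetical.

```python
import json


def nas_claim(name: str, size: str, storage_class: str = "nas-standard") -> dict:
    """PersistentVolumeClaim asking a NAS-backed StorageClass for a shared volume."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            # NFS-style file storage can usually be mounted by many nodes at once.
            "accessModes": ["ReadWriteMany"],
            "storageClassName": storage_class,
            "resources": {"requests": {"storage": size}},
        },
    }


def pod_using_claim(pod_name: str, claim_name: str, mount_path: str) -> dict:
    """Pod that mounts the claim; the volume is provisioned and attached before it starts."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": pod_name},
        "spec": {
            "containers": [{
                "name": "app",
                "image": "busybox:1.36",
                "command": ["sh", "-c", "sleep 3600"],
                "volumeMounts": [{"name": "data", "mountPath": mount_path}],
            }],
            "volumes": [{
                "name": "data",
                "persistentVolumeClaim": {"claimName": claim_name},
            }],
        },
    }


bundle = {
    "apiVersion": "v1",
    "kind": "List",
    "items": [
        nas_claim("shared-data", "50Gi"),
        pod_using_claim("worker", "shared-data", "/data"),
    ],
}
# Pipe into `kubectl apply -f -`; kubectl accepts JSON as well as YAML.
print(json.dumps(bundle, indent=2))
```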

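This automation extends to the full storage lifecycle. Dynamic provisioning creates volumes on demand from StorageClass definitions. Their driver-specific parameters typically select performance tiers and data protection policies, while each PersistentVolumeClaim requests its own size and access mode (for example, ReadWriteMany for shared file storage). When a claim is deleted, the underlying volume is either retained for later use or deleted automatically, according to the StorageClass reclaim policy. Deleting a pod on its own leaves the volume intact.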
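A StorageClass is where that policy lives. The sketch below generates one. The provisioner name `nas.csi.example.com` and the `tier` and `snapshotSchedule` parameters are hypothetical, because parameters are passed to the driver verbatim and each driver defines its own keys. `reclaimPolicy`, `allowVolumeExpansion`, and `volumeBindingMode` are standard StorageClass fields.

```python
import json

VALID_RECLAIM_POLICIES = ("Retain", "Delete")


def nas_storage_class(name: str, tier: str, reclaim_policy: str = "Retain") -> dict:
    """StorageClass for dynamic provisioning from a hypothetical NAS CSI driver."""
    if reclaim_policy not in VALID_RECLAIM_POLICIES:
        raise ValueError(f"reclaimPolicy must be one of {VALID_RECLAIM_POLICIES}")
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": "nas.csi.example.com",  # hypothetical driver name
        # Driver-specific parameters; these keys are invented for illustration.
        "parameters": {"tier": tier, "snapshotSchedule": "hourly"},
        # Retain keeps the volume and its data after the claim is deleted.
        "reclaimPolicy": reclaim_policy,
        "allowVolumeExpansion": True,
        # Delay provisioning until a pod that uses the claim is scheduled.
        "volumeBindingMode": "WaitForFirstConsumer",
    }


print(json.dumps(nas_storage_class("nas-gold", tier="gold"), indent=2))
```

A common pattern is `Retain` for classes that back production data and `Delete` for scratch or CI classes, so that deleting a claim by mistake does not remove the only copy of important data.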

Snapshot and cloning capabilities built into software-defined NAS storage accelerate development workflows. Developers can quickly create test environments by cloning production data, while automated snapshots provide rapid recovery points with little impact on application performance. These operations happen at the storage layer, but when the CSI driver supports them they are triggered through standard Kubernetes APIs such as VolumeSnapshot, maintaining a consistent operational model.
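The sketch below shows the clone-from-production workflow expressed as Kubernetes objects. It assumes the VolumeSnapshot CRDs and snapshot controller are installed and that the NAS CSI driver supports snapshots. The VolumeSnapshotClass `nas-snapclass`, the StorageClass `nas-standard`, and the claim names are hypothetical.

```python
import json

SNAPSHOT_GROUP = "snapshot.storage.k8s.io"


def volume_snapshot(name: str, source_claim: str, snapshot_class: str = "nas-snapclass") -> dict:
    """Request a point-in-time snapshot of an existing claim."""
    return {
        "apiVersion": f"{SNAPSHOT_GROUP}/v1",
        "kind": "VolumeSnapshot",
        "metadata": {"name": name},
        "spec": {
            "volumeSnapshotClassName": snapshot_class,
            "source": {"persistentVolumeClaimName": source_claim},
        },
    }


def claim_from_snapshot(name: str, snapshot: str, size: str,
                        storage_class: str = "nas-standard") -> dict:
    """New claim pre-populated from a snapshot, e.g. a test copy of production data."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteMany"],
            "storageClassName": storage_class,
            # Must be at least the snapshot's restore size.
            "resources": {"requests": {"storage": size}},
            "dataSource": {"name": snapshot, "kind": "VolumeSnapshot",
                           "apiGroup": SNAPSHOT_GROUP},
        },
    }


snap = volume_snapshot("orders-db-nightly", source_claim="orders-db")
clone = claim_from_snapshot("orders-db-test", snapshot="orders-db-nightly", size="100Gi")
print(json.dumps([snap, clone], indent=2))
```

Kubernetes also supports cloning a claim directly by setting `dataSource.kind` to `PersistentVolumeClaim`. In that case the source must be in the same namespace and use the same StorageClass.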

StatefulSets, which manage pods that need stable identities and stable storage, benefit particularly from NAS integration. Each pod in a StatefulSet receives its own persistent volume, and that volume follows the pod across node failures or rescheduling events. Because the data lives on the network rather than on a local disk, the NAS system keeps it accessible regardless of which Kubernetes node the pod lands on.
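Per-pod storage comes from a StatefulSet's `volumeClaimTemplates`. The sketch below builds one, assuming a headless Service with the same name as the StatefulSet already exists. It also derives the claim names Kubernetes creates, which follow the `<template>-<statefulset>-<ordinal>` pattern. The `db` name, the image, and the `nas-standard` class are hypothetical.

```python
from __future__ import annotations

import json


def statefulset(name: str, replicas: int, storage_class: str = "nas-standard",
                size: str = "20Gi") -> dict:
    """StatefulSet whose pods each get their own claim from a NAS StorageClass."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "StatefulSet",
        "metadata": {"name": name},
        "spec": {
            "serviceName": name,  # assumes a headless Service with this name exists
            "replicas": replicas,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {"containers": [{
                    "name": "app",
                    "image": "busybox:1.36",
                    "command": ["sh", "-c", "sleep 3600"],
                    "volumeMounts": [{"name": "data", "mountPath": "/data"}],
                }]},
            },
            # One claim per pod, created from this template.
            "volumeClaimTemplates": [{
                "metadata": {"name": "data"},
                "spec": {
                    "accessModes": ["ReadWriteOnce"],
                    "storageClassName": storage_class,
                    "resources": {"requests": {"storage": size}},
                },
            }],
        },
    }


def expected_claim_names(sts: dict) -> list[str]:
    """Claim names follow <template>-<statefulset>-<ordinal>."""
    template = sts["spec"]["volumeClaimTemplates"][0]["metadata"]["name"]
    name = sts["metadata"]["name"]
    return [f"{template}-{name}-{i}" for i in range(sts["spec"]["replicas"])]


db = statefulset("db", replicas=3)
print(json.dumps(db, indent=2))
print(expected_claim_names(db))  # ['data-db-0', 'data-db-1', 'data-db-2']
```

Because pod `db-1` always binds to claim `data-db-1`, a rescheduled pod reattaches to the same data. By default, these claims are also kept when the StatefulSet is scaled down or deleted.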

Deploying NAS in AWS Cloud

Running NAS in AWS Cloud environments requires balancing the benefits of elastic infrastructure against the performance characteristics of cloud networking and storage. Several deployment models exist, each with distinct tradeoffs for latency, cost, and operational complexity.

Amazon FSx for NetApp ONTAP provides fully managed NAS storage that integrates with existing AWS services while offering enterprise features like snapshots, replication, and multi-protocol access. This service handles infrastructure management, patches, and upgrades, allowing teams to focus on application development rather than storage operations.

For organizations requiring more control, deploying software-defined NAS storage on EC2 instances offers greater flexibility in configuration and potentially lower costs at scale. Solutions designed for cloud deployment can use EBS volumes as backing storage while presenting NFS or SMB shares to Kubernetes clusters. This approach requires managing the NAS software stack but provides full control over scaling, performance tuning, and feature adoption.
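For a self-managed NAS on EC2 that exports NFS, the simplest way to hand a share to Kubernetes is a statically provisioned PersistentVolume using the built-in `nfs` volume source, plus a claim pre-bound to it. The sketch below assumes a hypothetical export at `10.0.1.25:/exports/shared` and an NFS 4.1 mount option. Dynamic provisioning would instead require a CSI driver for the NAS software.

```python
import json


def nfs_persistent_volume(name: str, server: str, path: str, size: str) -> dict:
    """Static PersistentVolume pointing at an existing NFS export."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolume",
        "metadata": {"name": name},
        "spec": {
            "capacity": {"storage": size},
            "accessModes": ["ReadWriteMany"],
            "persistentVolumeReclaimPolicy": "Retain",  # never delete the share's data
            "storageClassName": "",                     # opt out of dynamic provisioning
            "mountOptions": ["nfsvers=4.1"],            # assumed NFS version
            "nfs": {"server": server, "path": path},
        },
    }


def claim_for_static_pv(name: str, pv_name: str, size: str) -> dict:
    """Claim pre-bound to the PV above by name."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "accessModes": ["ReadWriteMany"],
            "storageClassName": "",
            "volumeName": pv_name,
            "resources": {"requests": {"storage": size}},
        },
    }


pv = nfs_persistent_volume("ec2-nas-shared", "10.0.1.25", "/exports/shared", "1Ti")
pvc = claim_for_static_pv("shared", "ec2-nas-shared", "1Ti")
print(json.dumps([pv, pvc], indent=2))
```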

Network topology significantly impacts NAS performance in AWS. Placing NAS storage nodes and Kubernetes worker nodes in the same availability zone minimizes latency, though it reduces fault tolerance. Multi-AZ deployments improve resilience but introduce cross-AZ data transfer costs and higher latencies that can affect application performance.
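A quick cost model helps weigh this tradeoff. The sketch below assumes client pods are spread evenly across zones while the NAS serves from a single zone, so a fraction (k-1)/k of traffic crosses zone boundaries. The per-GB rate is an input to fill in from current AWS pricing. The $0.02/GB and 500 GB/day figures used here are purely illustrative.

```python
def cross_az_fraction(client_zones: int) -> float:
    """Share of traffic that crosses zones when client pods are spread evenly over
    `client_zones` zones and the NAS serves all of them from a single zone."""
    if client_zones < 1:
        raise ValueError("client_zones must be at least 1")
    return (client_zones - 1) / client_zones


def monthly_cross_az_cost(gb_per_day: float, client_zones: int,
                          rate_per_gb: float, days: int = 30) -> float:
    """Estimated monthly inter-AZ transfer charge.

    `rate_per_gb` is an input to take from current AWS pricing, not a quoted price.
    """
    return gb_per_day * days * cross_az_fraction(client_zones) * rate_per_gb


# Hypothetical workload: 500 GB/day of NAS traffic at an illustrative $0.02/GB.
print(round(monthly_cross_az_cost(500, client_zones=3, rate_per_gb=0.02), 2))  # 200.0
print(monthly_cross_az_cost(500, client_zones=1, rate_per_gb=0.02))            # 0.0
```

The single-zone case costs nothing in transfer but offers no zone-level redundancy. That is exactly the latency and resilience tension described above, now with a number attached.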

Storage tiering strategies help optimize costs in cloud environments. Frequently accessed data remains on high-performance EBS volumes, while less active datasets move automatically to lower-cost S3 storage according to the platform's tiering policies. Software-defined NAS storage platforms with cloud-aware tiering can make these transitions transparently to applications, maintaining a single namespace while reducing storage expenses.
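The sketch below shows an age-based tiering decision. The 30-day threshold, the `hot` and `cold` labels, and the `FileRecord` type are all hypothetical; real platforms expose their own tiering policies and often track data in blocks rather than whole files. Only each file's tier changes, never its path, which is what keeps the namespace stable for applications.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class FileRecord:
    path: str
    last_access: datetime
    tier: str  # "hot" (e.g. EBS-backed) or "cold" (e.g. S3-backed); labels are illustrative


def plan_tiering(files: list[FileRecord], now: datetime,
                 cool_after: timedelta = timedelta(days=30)) -> tuple[list[str], list[str]]:
    """Return (paths to demote to cold, paths to promote back to hot)."""
    demote, promote = [], []
    for f in files:
        idle = now - f.last_access
        if f.tier == "hot" and idle >= cool_after:
            demote.append(f.path)   # idle long enough to move to cheaper storage
        elif f.tier == "cold" and idle < cool_after:
            promote.append(f.path)  # recently read again, so bring it back
    return demote, promote


now = datetime(2024, 3, 1)
files = [
    FileRecord("/projects/a/model.ckpt", now - timedelta(days=90), "hot"),
    FileRecord("/projects/a/train.csv", now - timedelta(days=2), "hot"),
    FileRecord("/archive/2023/logs.tar", now - timedelta(hours=1), "cold"),
]
print(plan_tiering(files, now))
# (['/projects/a/model.ckpt'], ['/archive/2023/logs.tar'])
```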

Key Considerations for Production Deployments

Successful NAS storage deployments in Kubernetes environments require careful planning around performance, data protection, and operational integration. Application workload characteristics should drive storage architecture decisions rather than following generic best practices.

Performance requirements vary dramatically between use cases. Database workloads demand low-latency random I/O, while machine learning training jobs prioritize high sequential throughput for reading large datasets. Understanding these patterns helps inform decisions about storage tiers, caching strategies, and whether workloads are better suited to NAS or block storage backends.
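One way to ground these decisions in data is to measure how sequential a workload's I/O actually is. The sketch below takes a trace of `(offset, length)` requests for a single file or stream and counts how often each request starts exactly where the previous one ended. The trace format and the 0.8 cut-off are assumptions for illustration, not a standard metric definition.

```python
from __future__ import annotations


def sequential_fraction(trace: list[tuple[int, int]]) -> float:
    """Share of requests that start exactly where the previous one ended.

    `trace` holds (offset, length) pairs in bytes, in the order they were issued.
    """
    if len(trace) < 2:
        return 0.0
    sequential = sum(
        1 for (prev_off, prev_len), (off, _) in zip(trace, trace[1:])
        if off == prev_off + prev_len
    )
    return sequential / (len(trace) - 1)


def classify(trace: list[tuple[int, int]], threshold: float = 0.8) -> str:
    """Label a trace 'sequential' or 'random'; the 0.8 cut-off is an arbitrary assumption."""
    return "sequential" if sequential_fraction(trace) >= threshold else "random"


MIB = 1 << 20
ml_reader = [(i * MIB, MIB) for i in range(8)]                      # streaming 1 MiB reads
db_reader = [(8192, 4096), (0, 4096), (65536, 4096), (4096, 4096)]  # scattered 4 KiB reads
print(classify(ml_reader), classify(db_reader))  # sequential random
```

Large sequential reads favor throughput-oriented NAS tiers. Small random reads point toward low-latency tiers, caching, or block storage.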

Data protection policies must account for both infrastructure failures and logical corruption or deletion. While scale-out architectures provide hardware redundancy, application-consistent backups and disaster recovery planning remain essential. Integration with backup tools that understand Kubernetes primitives ensures complete recovery capabilities for both persistent volumes and application configurations.

Monitoring and observability become more complex in distributed storage environments. Effective monitoring captures metrics at multiple levels: storage cluster health, individual volume performance, and application-level latency as experienced by pods. Correlating these metrics helps identify whether performance issues stem from storage constraints, network congestion, or application behavior.
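The correlation step can be sketched as a simple triage rule. It compares tail latency seen by pods with tail latency reported by the storage system for the same volume and time window. Both thresholds and the three verdicts are hypothetical heuristics, not a standard method, and real investigations need more signals than a single percentile.

```python
from __future__ import annotations

import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile, with 0 < p <= 100."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def likely_bottleneck(pod_ms: list[float], storage_ms: list[float],
                      storage_p99_limit_ms: float = 5.0, gap_ratio: float = 2.0) -> str:
    """Rough triage of where I/O latency comes from; both thresholds are assumptions."""
    pod_p99 = percentile(pod_ms, 99)
    storage_p99 = percentile(storage_ms, 99)
    if storage_p99 > storage_p99_limit_ms:
        return "storage"            # the cluster itself reports slow I/O
    if pod_p99 > gap_ratio * storage_p99:
        return "network-or-client"  # pods see far more latency than storage serves
    return "application"            # the I/O path looks healthy; look at the app


print(likely_bottleneck([12.0] * 100, [1.0] * 100))  # network-or-client
print(likely_bottleneck([9.0] * 100, [8.0] * 100))   # storage
print(likely_bottleneck([1.5] * 100, [1.0] * 100))   # application
```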

Building Future-Ready Storage Infrastructure

Software-defined NAS storage represents a fundamental shift in how organizations provision and manage persistent data for containerized workloads. By embracing software-defined principles and scale-out NAS storage architectures, these platforms deliver the agility, horizontal scalability, and automation that Kubernetes-based applications demand.

Organizations evaluating storage options should prioritize solutions that integrate natively with Kubernetes, support horizontal scaling, and operate effectively in their chosen environment—whether on-premises, in AWS cloud, or across hybrid infrastructure. The right storage platform becomes invisible to developers while providing the reliability and performance that production workloads require.

As container adoption accelerates, the gap between application infrastructure and storage capabilities will only widen for organizations relying on legacy systems. Now is the time to assess whether your storage architecture can support the next generation of cloud-native applications.
